Can You Hear the Difference Between 16-Bit and 24-Bit

In the modern age of audio, you can't move for mentions of "Hi-Res" and 24-bit "Studio Quality" music. If you haven't spotted the trend in high-end smartphones (Sony's LDAC Bluetooth codec) and streaming services like Qobuz, then you really need to start reading this site more.

The promise is simple: superior listening quality thanks to more data, aka bit depth. That's 24 bits of digital ones and zeroes versus the puny 16-bit hangover from the CD era. Of course, you'll have to pay extra for these higher quality products and services, but more bits are surely better, right?

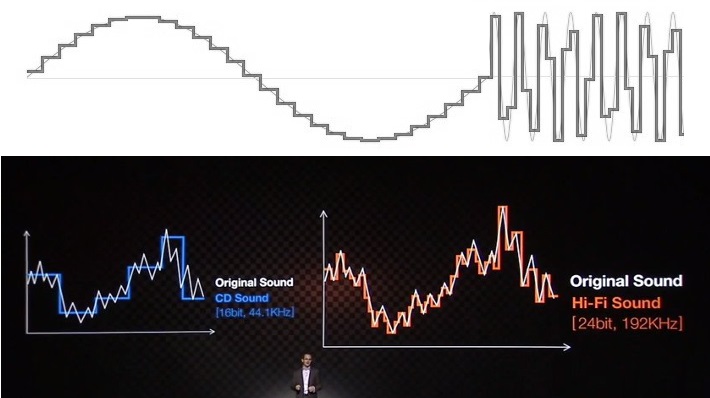

"Low res" audio is often shown off as a staircase waveform. This is not how audio sampling works and isn't what audio looks like coming out of a device.

Not necessarily. The need for higher and higher bit depths isn't based on scientific reality, but rather on a twisting of the truth and exploiting a lack of consumer awareness about the science of sound. Ultimately, companies marketing 24-bit audio have far more to gain in profit than you do in superior playback quality.

Editor's note: this article was updated on July 13, 2021, to update some technical wording and to add a contents menu.

Bit depth and sound quality: Stair-stepping isn't a thing

To suggest that 24-bit audio is a must-have, marketers (and many others who try to explain this topic) trot out the very familiar sound quality stairway to heaven. The 16-bit example always shows a bumpy, jagged reproduction of a sine wave or other signal, while the 24-bit equivalent looks beautifully smooth and higher resolution. It's a simple visual aid, but one that relies on ignorance of the topic and the science to lead consumers to the wrong conclusions.

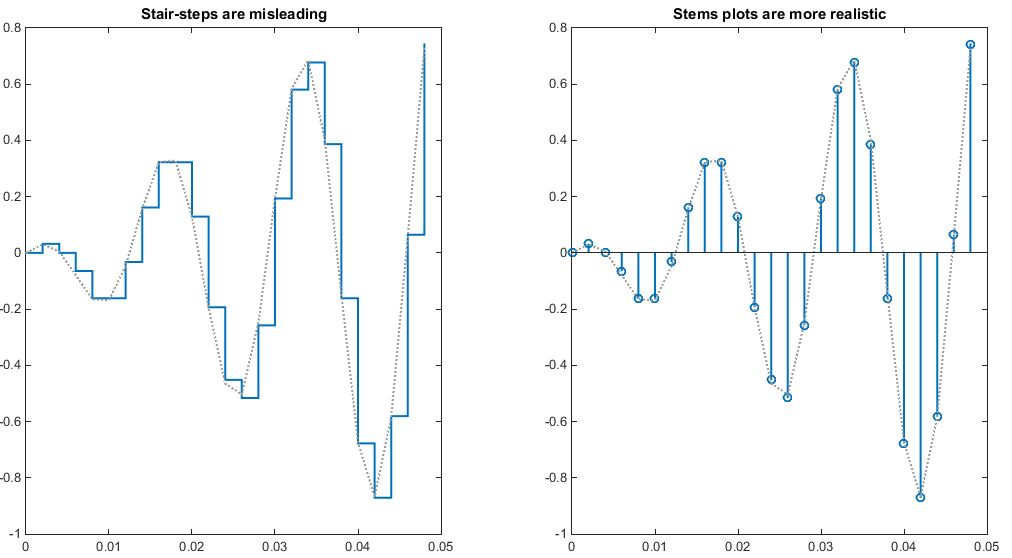

Before someone bites my head off, technically speaking these stair-step examples do somewhat accurately portray audio in the digital domain. However, a stem plot/lollipop chart is a more accurate graphic to visualize audio sampling than these stair-steps. Think about it this way: a sample contains an amplitude at a very specific point in time, not an amplitude held for a specific length of time.

The use of stair graphs is deliberately misleading when stem charts provide a more accurate representation of digital audio. These two graphs plot the same data points, but the stair plot appears much less accurate.

However, it's correct that an analog to digital converter (ADC) has to fit an infinitely variable analog audio signal into a finite number of bits. A sample that falls between two levels has to be rounded to the closest approximation, which is known as quantization error or quantization noise. (Remember this, as we'll come back to it.)
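To make the rounding concrete, here is a minimal Python sketch of a uniform quantizer. It assumes samples normalized to the range -1.0 to 1.0 and evenly spaced levels; the function names `quantize` and `max_error` are illustrative only, and real converters are considerably more involved.

```python
import math

def quantize(x: float, bits: int) -> float:
    """Round x (expected in [-1.0, 1.0]) to the nearest of 2**bits evenly spaced levels."""
    levels = 2 ** bits
    step = 2.0 / (levels - 1)               # spacing between adjacent levels
    index = round((x + 1.0) / step)         # index of the nearest level
    index = max(0, min(levels - 1, index))  # clip anything outside the range
    return index * step - 1.0

def max_error(bits: int, n_samples: int = 1000) -> float:
    """Largest rounding error over one cycle of a full-scale sine wave."""
    worst = 0.0
    for i in range(n_samples):
        x = math.sin(2 * math.pi * i / n_samples)
        worst = max(worst, abs(x - quantize(x, bits)))
    return worst

for bits in (2, 4, 8):
    print(f"{bits} bits: largest rounding error = {max_error(bits):.4f}")
```

The rounding error can never exceed half a step, so every extra bit roughly halves the worst case.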

Nonetheless, if you look at the audio output of any audio digital to analog converter (DAC) built this century, you won't see any stair-steps. Not even if you output an 8-bit signal. So what gives?

An 8-bit, 10kHz sine wave output captured from a low-cost Pixel 3a smartphone. We can see some noise but no noticeable stair-steps so often portrayed by audio companies.

First, what these stair-step diagrams describe, if we apply them to an audio output, is something called a zero-order-hold DAC. This is a very simple and cheap DAC technology where a signal is switched between various levels every new sample to give an output. This is not used in any professional or half-decent consumer audio products. You might find it in a $5 microcontroller, but certainly not anywhere else. Misrepresenting audio outputs in this way implies a distorted, inaccurate waveform, but this isn't what you're getting.

In reality, a modern ∆Σ DAC output is an oversampled 1-bit PDM signal (right), rather than a zero-hold signal (left). The PDM signal produces a lower-noise analog output when filtered.

Audio-grade ADCs and DACs are predominantly based on delta-sigma (∆Σ) modulation. Components of this caliber include interpolation and oversampling, noise shaping, and filtering to smooth out and reduce noise. Delta-sigma DACs convert audio samples into a 1-bit stream (pulse-density modulation) with a very high sample rate. When filtered, this produces a smooth output signal with noise pushed well out of audible frequencies.
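For the curious, the idea can be sketched in a few lines of Python. This is a toy first-order modulator, not how any particular DAC chip is built: real parts use higher-order noise shaping and proper reconstruction filters. The 64x oversampling factor and the moving-average "filter" are assumptions chosen purely for illustration.

```python
import math

def delta_sigma_1bit(samples):
    """Toy first-order delta-sigma modulator: values in [-1, 1] become a +1/-1 stream."""
    integrator = 0.0
    feedback = 0.0
    stream = []
    for x in samples:
        integrator += x - feedback  # accumulate the difference from the last output
        feedback = 1.0 if integrator >= 0 else -1.0
        stream.append(feedback)
    return stream

def moving_average(stream, window):
    """Crude low-pass filter standing in for the DAC's analog output filter."""
    out = []
    total = 0.0
    for i, value in enumerate(stream):
        total += value
        if i >= window:
            total -= stream[i - window]
        out.append(total / min(i + 1, window))
    return out

oversample = 64      # assumed oversampling factor
n = 64 * oversample  # one slow sine cycle at the oversampled rate
signal = [0.5 * math.sin(2 * math.pi * i / n) for i in range(n)]

pdm = delta_sigma_1bit(signal)               # only +1.0 and -1.0 values
recovered = moving_average(pdm, oversample)  # roughly follows the original sine

worst = max(abs(r - s) for r, s in zip(recovered[oversample:], signal[oversample:]))
print(f"largest deviation after filtering: {worst:.3f}")
```

The density of +1 pulses follows the input level, which is why even a simple averaging filter turns the 1-bit stream back into something close to the original wave rather than a staircase.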

In a nutshell: modern DACs don't output rough-looking jagged audio samples; they output a bit stream that is noise filtered into a very accurate, smooth output. This stair-stepping visualization is wrong because of something called "quantization noise."

Understanding quantization noise

In any finite system, rounding errors happen. It's true that a 24-bit ADC or DAC will have a smaller rounding error than a 16-bit equivalent, but what does that actually mean? More importantly, what do we actually hear? Is it distortion or fuzz, are details lost forever?

It's actually a little bit of both depending on whether you're in the digital or analog realms. But the key concept to understanding both is getting to grips with the noise floor, and how this improves as bit depth increases. To demonstrate, let's step back from 16 and 24 bits and look at very small bit-depth examples.

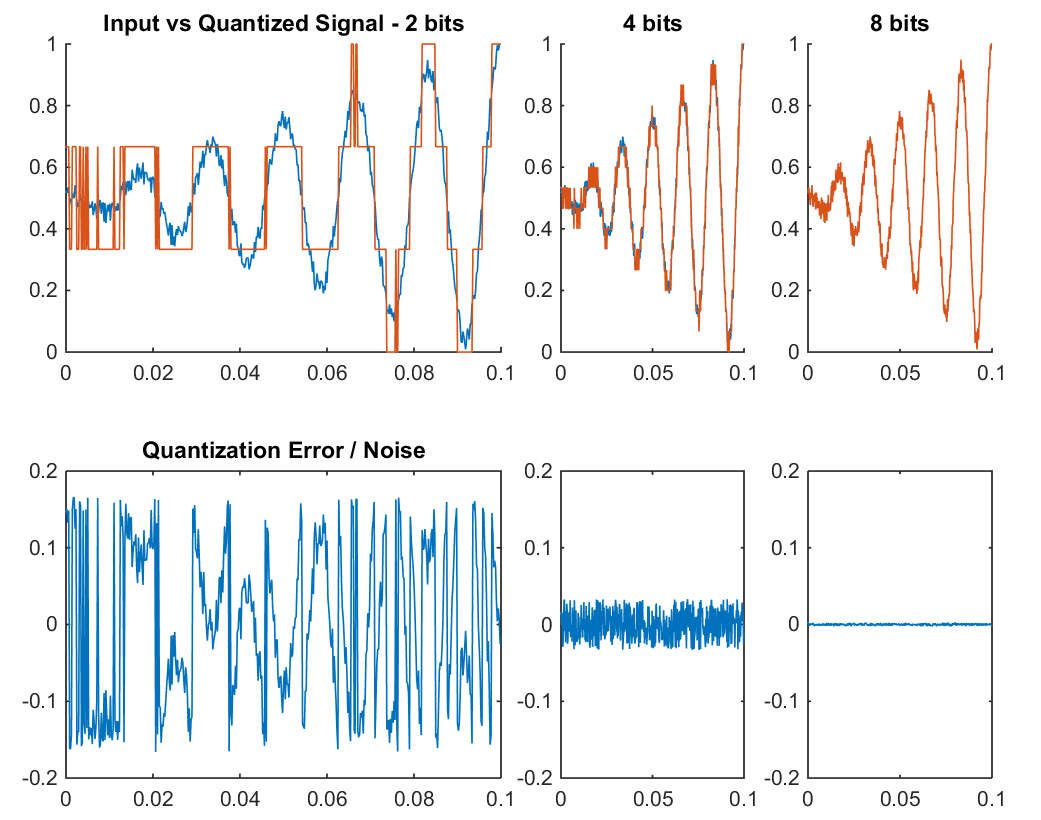

The difference between 16 and 24 bit depths is not the accuracy in the shape of a waveform, but the available limit before digital noise interferes with our signal.

There are quite a few things to check out in the example below, so first a quick explanation of what we're looking at. We have our input (blue) and quantized (orange) waveforms in the top charts, with bit depths of 2, 4, and 8 bits. We've also added a small amount of noise to our signal to better simulate the real world. At the bottom, we have a graph of the quantization error or rounding noise, which is calculated by subtracting the quantized signal from the input signal.

Quantization noise increases the smaller the bit depth is, through rounding errors.

Increasing the bit depth clearly makes the quantized signal a better match for the input signal. However, that's not what's important; notice the much larger error/noise signal for the lower bit depths. The quantized signal hasn't removed data from our input, it's actually added in that error signal. Additive synthesis tells us that a signal can be reproduced by the sum of any other two signals, including out of phase signals that act as subtraction. That's how noise cancellation works. So these rounding errors are introducing a new noise signal.

This isn't just theoretical, you can actually hear more and more noise in lower bit-depth audio files. To understand why, examine what's happening in the 2-bit example with very small signals, such as before 0.2 seconds. Very small changes in the input signal produce large changes in the quantized version. This is the rounding error in action, which has the effect of amplifying small-signal noise. So once again, noise becomes louder as bit depth decreases.

Quantization doesn't remove data from our input, it actually adds in a noisy error signal.

Think about this in reverse too: it's not possible to capture a signal smaller than the size of the quantization step, ironically known as the least significant bit. Small signal changes have to jump up to the nearest quantization level. Larger bit depths have smaller quantization steps and thus smaller levels of noise amplification.

Most importantly though, note that the amplitude of quantization noise remains consistent, regardless of the amplitude of the input signals. This demonstrates that noise happens at all the different quantization levels, so there's a consistent level of noise for any given bit depth. Larger bit depths produce less noise. We should, therefore, think of the differences between 16 and 24 bit depths not as the accuracy in the shape of a waveform, but as the available limit before digital noise interferes with our signal.
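You can check this behavior with a quick Python experiment. The sketch below quantizes sine waves of different amplitudes and measures the RMS of the rounding error. It assumes a simple non-clipping quantizer and, like the charts above, adds a little random noise (here about one quantization step, an arbitrary choice) to the input; the helper names are made up for this example.

```python
import math
import random

def round_to_step(x: float, bits: int) -> float:
    """Round x to the nearest multiple of the step size (no clipping, for simplicity)."""
    step = 2.0 / (2 ** bits)
    return round(x / step) * step

def error_rms(amplitude: float, bits: int, n: int = 20000, seed: int = 0) -> float:
    """RMS of (input - quantized) for a slightly noisy sine wave of the given amplitude."""
    rng = random.Random(seed)
    step = 2.0 / (2 ** bits)
    total = 0.0
    for i in range(n):
        # Sine wave plus a small amount of background noise (assumed: one step in size)
        x = amplitude * math.sin(2 * math.pi * 0.01234 * i) + rng.gauss(0.0, step)
        err = x - round_to_step(x, bits)
        total += err * err
    return math.sqrt(total / n)

for bits in (8, 12):
    for amplitude in (0.05, 0.3, 0.9):
        print(f"{bits}-bit, amplitude {amplitude}: error RMS = {error_rms(amplitude, bits):.6f}")
```

Within one bit depth the error level should barely move as the signal gets louder or quieter, while moving from 8 to 12 bits drops it substantially.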

Bit depth is all about noise

Credit: Kelly Sikkema. We require a bit depth with enough SNR to accommodate our background noise to capture our audio as perfectly as it sounds in the real world.

Now that we are talking about bit depth in terms of noise, let's go back to our above graphics one last time. Note how the 8-bit example looks like an almost perfect match for our noisy input signal. This is because its 8-bit resolution is actually sufficient to capture the level of the background noise. In other words: the quantization step size is smaller than the amplitude of the noise, or the signal-to-noise ratio (SNR) is better than the background noise level.

The equation 20 × log10(2^n), where n is the bit depth, gives us the SNR in dB. An 8-bit signal has an SNR of 48dB, 12 bits is 72dB, while 16-bit hits 96dB, and 24 bits a whopping 144dB. This is important because we now know that we only need a bit depth with enough SNR to accommodate the dynamic range between our background noise and the loudest signal we want to capture to reproduce audio as perfectly as it appears in the real world. It gets a little tricky moving from the relative scales of the digital realm to the sound pressure-based scales of the physical world, so we'll try to keep it simple.
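If you want to check those figures yourself, the formula is a one-liner. The function name `snr_db` is just a label for this sketch.

```python
import math

def snr_db(bits: int) -> float:
    """Theoretical SNR in dB for a given bit depth: 20 * log10(2 ** bits)."""
    return 20 * math.log10(2 ** bits)

for bits in (8, 12, 16, 24):
    print(f"{bits}-bit: {snr_db(bits):.0f} dB")
# 8-bit: 48 dB
# 12-bit: 72 dB
# 16-bit: 96 dB
# 24-bit: 144 dB
```

Each extra bit adds roughly 6dB, which is a handy rule of thumb for the rest of the article.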

CD-quality may be "only" 16-bit, but it's overkill for quality.

Your ear has a sensitivity ranging from 0dB (silence) to about 120dB (painfully loud sound), and the theoretical ability (depending on a few factors) to discern volumes that are just 1dB apart. So the dynamic range of your ear is about 120dB, or close to 20 bits.

However, you can't hear all this at once, as the tympanic membrane, or eardrum, tightens to reduce the amount of volume actually reaching the inner ear in loud environments. You're also not going to be listening to music anywhere near this loud, because you'll go deaf. Furthermore, the environments you and I listen to music in are not as silent as healthy ears can hear. A well-treated recording studio may take us down to below 20dB for background noise, but listening in a bustling living room or on the bus will obviously worsen the conditions and reduce the usefulness of a high dynamic range.

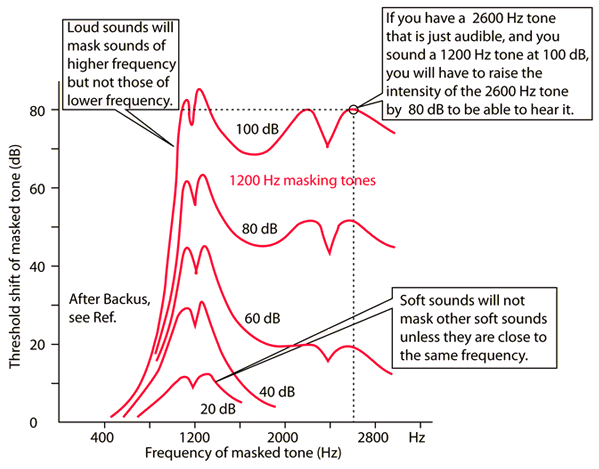

The human ear has a huge dynamic range, but just not all at once. Masking and our ear's own hearing protection reduce its effectiveness.

On top of all that: as loudness increases, higher frequency masking takes effect in your ear. At low volumes of 20 to 40dB, masking doesn't occur except for sounds close in pitch. However, at 80dB sounds below 40dB will be masked, while at 100dB sounds below 70dB are impossible to hear. The dynamic nature of the ear and listening material makes it hard to give a precise number, but the real dynamic range of your hearing is likely in the region of 70dB in an average environment, down to just 40dB in very loud environments. A bit depth of just 12 bits would probably have most people covered, so 16-bit CDs give us plenty of headroom.
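Flipping the SNR formula around gives a rough idea of how many bits a given dynamic range calls for. This is a back-of-the-envelope sketch that ignores dithering and noise shaping; `bits_needed` is a hypothetical helper, not a standard function.

```python
import math

DB_PER_BIT = 20 * math.log10(2)  # roughly 6.02 dB of dynamic range per bit

def bits_needed(dynamic_range_db: float) -> int:
    """Smallest whole number of bits whose theoretical SNR covers the given range."""
    return math.ceil(dynamic_range_db / DB_PER_BIT)

for label, db in (("full range of the ear", 120),
                  ("average listening environment", 70),
                  ("very loud environment", 40)):
    print(f"{label}: {db} dB -> {bits_needed(db)} bits")
```

That works out to about 20 bits for the ear's full 120dB, 12 bits for a 70dB range, and only 7 bits for 40dB.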

Credit: hyperphysics. High-frequency masking occurs at loud listening volumes, limiting our perception of quieter sounds.

Instruments and recording equipment introduce noise too (especially guitar amps), even in very quiet recording studios. There have also been a few studies into the dynamic range of different genres, including this one, which shows a typical 60dB dynamic range. Unsurprisingly, genres with a greater affinity for quiet parts, such as choir, opera, and piano, showed maximum dynamic ranges around 70dB, while "louder" rock, pop, and rap genres tended towards 60dB and below. Ultimately, music is only produced and recorded with so much fidelity.

You might be familiar with the music industry "loudness wars", which certainly defeat the purpose of today's Hi-Res audio formats. Heavy use of compression (which boosts noise and attenuates peaks) reduces dynamic range. Modern music has considerably less dynamic range than albums from 30 years ago. Theoretically, modern music could be distributed at lower bit rates than old music. You can check out the dynamic range of many albums here.

16 bits is all you need

This has been quite a journey, but hopefully, you've come away with a much more nuanced picture of bit depth, noise, and dynamic range than those misleading staircase examples you so often see.

Bit depth is all about noise, and the more bits of data you have to store audio, the less quantization noise will be introduced into your recording. By the same token, you'll also be able to capture smaller signals more accurately, helping to drive the digital noise floor below the recording or listening environment. That's all we need bit depth for. There's no benefit in using huge bit depths for audio masters.

Credit: Alexey Ruban. Due to the way noise gets summed during the mixing process, recording audio at 24 bits makes sense. It's not necessary for the final stereo master.

Surprisingly, 12 bits is probably enough for a decent sounding music master and to cater to the dynamic range of most listening environments. However, digital audio transports more than just music, and examples like speech or environmental recordings for TV can make use of a wider dynamic range than most music does. Plus a little headroom for separation between loud and quiet never hurt anyone.

On balance, 16 bits (96dB of dynamic range or 120dB with dithering applied) accommodates a wide range of audio types, as well as the limits of human hearing and typical listening environments. The perceptual increases in 24-bit quality are highly debatable if not simply a placebo, as I hope I've demonstrated. Plus, the increase in file sizes and bandwidth makes them unnecessary. The type of compression used to shrink down the file size of your music library or stream has a much more noticeable impact on sound quality than whether it's a 16 or 24-bit file.
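To put a number on that file size and bandwidth increase, here is a rough calculation for uncompressed PCM audio. It assumes stereo at the CD sample rate of 44.1kHz for both bit depths; Hi-Res files often use higher sample rates too, which would widen the gap further.

```python
def pcm_bitrate_bps(sample_rate_hz: int, bit_depth: int, channels: int = 2) -> int:
    """Uncompressed PCM bitrate in bits per second."""
    return sample_rate_hz * bit_depth * channels

def megabytes_per_minute(sample_rate_hz: int, bit_depth: int, channels: int = 2) -> float:
    """Storage needed for one minute of uncompressed audio, in megabytes (10**6 bytes)."""
    return pcm_bitrate_bps(sample_rate_hz, bit_depth, channels) * 60 / 8 / 1_000_000

SAMPLE_RATE = 44_100  # assumed: CD sample rate, stereo

for depth in (16, 24):
    kbps = pcm_bitrate_bps(SAMPLE_RATE, depth) / 1000
    mb = megabytes_per_minute(SAMPLE_RATE, depth)
    print(f"{depth}-bit: {kbps:.1f} kbps, {mb:.1f} MB per minute")
```

At the same sample rate, a 24-bit file is exactly 50% larger than its 16-bit equivalent before any compression is applied.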

Source: https://www.soundguys.com/audio-bit-depth-explained-23706/
